Cybersecurity researchers have disclosed three security vulnerabilities impacting LangChain and LangGraph that, if successfully exploited, could expose filesystem data, environment secrets, and conversation history.

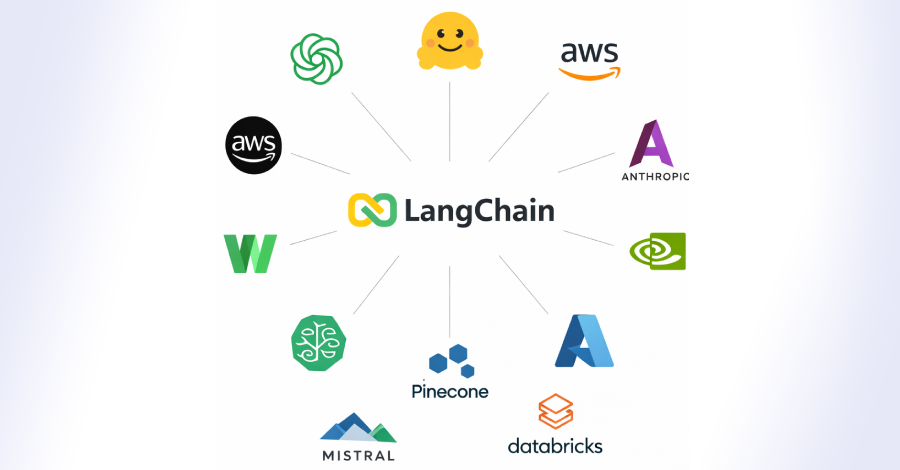

Both LangChain and LangGraph are open-source frameworks used to build applications powered by Large Language Models (LLMs). LangGraph builds on LangChain to support more sophisticated, non-linear agentic workflows. According to statistics on the Python Package Index (PyPI), LangChain, LangChain-Core, and LangGraph were downloaded more than 52 million, 23 million, and 9 million times, respectively, last week alone.

“Each vulnerability exposes a different class of enterprise data: filesystem files, environment secrets, and conversation history,” Cyera security researcher Vladimir Tokarev said in a report published Thursday.

The issues, in a nutshell, offer three independent paths that an attacker can leverage to drain sensitive data from any enterprise LangChain deployment. Details of the vulnerabilities are as follows –

- CVE-2026-34070 (CVSS score: 7.5) – A path traversal vulnerability in LangChain (“langchain_core/prompts/loading.py”) that lets an attacker access arbitrary files through the prompt-loading API, which performs no path validation, by supplying a specially crafted prompt template.

- CVE-2025-68664 (CVSS score: 9.3) – A deserialization of untrusted data vulnerability in LangChain that leaks API keys and environment secrets when an attacker passes as input a data structure that tricks the application into interpreting it as an already serialized LangChain object rather than regular user data.

- CVE-2025-67644 (CVSS score: 7.3) – An SQL injection vulnerability in the LangGraph SQLite checkpoint implementation that allows an attacker to manipulate SQL queries through metadata filter keys and run arbitrary SQL queries against the database.
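To illustrate the general class of bug behind the third item, here is a minimal sketch of how filter keys can become an injection point even when filter values are parameterized. It is not LangGraph's actual code: the `checkpoints` table, its columns, the allowlist, and every function name below are hypothetical and chosen only for the demonstration.

```python
import sqlite3

# Hypothetical allowlist: the only column names callers may filter on.
ALLOWED_FILTER_KEYS = {"thread_id", "source"}


def make_demo_db() -> sqlite3.Connection:
    """Build an in-memory database with a made-up checkpoint table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE checkpoints (thread_id TEXT, source TEXT, payload TEXT)"
    )
    conn.executemany(
        "INSERT INTO checkpoints VALUES (?, ?, ?)",
        [("t1", "input", "alice's chat"), ("t2", "loop", "bob's chat")],
    )
    return conn


def _run(conn: sqlite3.Connection, filters: dict) -> list:
    # Values are bound as parameters, but keys are spliced into the SQL text.
    clauses = [f"{key} = ?" for key in filters]
    where = " AND ".join(clauses) or "1 = 1"
    sql = f"SELECT thread_id, payload FROM checkpoints WHERE {where}"
    return conn.execute(sql, list(filters.values())).fetchall()


def unsafe_search(conn: sqlite3.Connection, filters: dict) -> list:
    """Vulnerable pattern: attacker-controlled keys reach the query text."""
    return _run(conn, filters)


def safe_search(conn: sqlite3.Connection, filters: dict) -> list:
    """Reject any key that is not a known column before building the query."""
    for key in filters:
        if key not in ALLOWED_FILTER_KEYS:
            raise ValueError(f"unsupported filter key: {key!r}")
    return _run(conn, filters)
```

In this toy setup, a key such as `"1 = 1 OR thread_id"` turns the `WHERE` clause into a condition that is always true, so `unsafe_search` returns every row, while `safe_search` raises `ValueError` before any SQL is executed.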

Successful exploitation of the aforementioned flaws could allow an attacker to read sensitive files like Docker configurations, siphon sensitive secrets via prompt injection, and access conversation histories associated with sensitive workflows. It’s worth noting that Cyata also shared details of CVE-2025-68664 in December 2025, codenaming it LangGrinch.
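As a rough illustration of how file reads of this kind are typically prevented, the sketch below resolves a user-supplied path and refuses anything that lands outside a trusted base directory. It is a generic containment check, not the fix shipped in langchain-core; the function name `resolve_inside` and the directory layout are assumptions made for the example, and `Path.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Return the resolved path only if it stays inside base_dir.

    Hypothetical helper: resolves symlinks and ".." segments first, then
    checks containment, so "../" tricks and absolute paths are rejected.
    """
    base = Path(base_dir).resolve()
    # Joining with an absolute user_path discards base, which the
    # containment check below then catches.
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate
```

A caller would pass every template path through such a check before opening the file, so that an input like `../../.docker/config.json` is rejected instead of read.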

The vulnerabilities have been patched in the following versions –

- CVE-2026-34070 – langchain-core >=1.2.22

- CVE-2025-68664 – langchain-core 0.3.81 and 1.2.5

- CVE-2025-67644 – langgraph-checkpoint-sqlite 3.0.1
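A quick way to check an environment against the versions listed above is sketched below. The package names and fixed versions come from the list; the comparison logic is an assumption: it treats each fixed version as applying to its own major release line, ignores pre-release suffixes, and assumes any later major line is patched. The helper names are hypothetical.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Fixed versions as listed in the advisory summary above.
FIXES = {
    "CVE-2026-34070": ("langchain-core", ["1.2.22"]),
    "CVE-2025-68664": ("langchain-core", ["0.3.81", "1.2.5"]),
    "CVE-2025-67644": ("langgraph-checkpoint-sqlite", ["3.0.1"]),
}


def parse(v: str) -> tuple:
    """Naive parser: keeps the first three numeric fields, drops suffixes."""
    return tuple(int(n) for n in re.findall(r"\d+", v)[:3])


def is_patched(installed: str, fixed: list) -> bool:
    """Assumption: each fix covers its own major line and everything after."""
    inst = parse(installed)
    fixes = [parse(f) for f in fixed]
    same_major = [f for f in fixes if f[0] == inst[0]]
    if same_major:
        return any(inst >= f for f in same_major)
    # No fix on this major line: only newer-than-all-fixes counts as patched.
    return inst > max(fixes)


def check_installed() -> dict:
    """Map each CVE to True/False, or None if the package is not installed."""
    results = {}
    for cve, (package, fixed) in FIXES.items():
        try:
            results[cve] = is_patched(version(package), fixed)
        except PackageNotFoundError:
            results[cve] = None
    return results


if __name__ == "__main__":
    for cve, status in check_installed().items():
        print(cve, {True: "patched", False: "VULNERABLE", None: "not installed"}[status])
```

This is only a convenience check; a dependency scanner or the project's own advisories remain the authoritative source for which releases are affected.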

The findings once again underscore how artificial intelligence (AI) plumbing is not immune to classic security vulnerabilities, potentially putting entire systems at risk.

The development comes days after a critical security flaw impacting Langflow (CVE-2026-33017, CVSS score: 9.3) came under active exploitation within 20 hours of public disclosure, enabling attackers to exfiltrate sensitive data from developer environments.

Naveen Sunkavally, chief architect at Horizon3.ai, said the vulnerability shares the same root cause as CVE-2025-3248, and stems from unauthenticated endpoints executing arbitrary code. With threat actors moving quickly to exploit newly disclosed flaws, it’s essential that users apply the patches as soon as possible for optimal protection.

“LangChain doesn’t exist in isolation. It sits at the center of a massive dependency web that stretches across the AI stack. Hundreds of libraries wrap LangChain, extend it, or depend on it,” Cyera said. “When a vulnerability exists in LangChain’s core, it doesn’t just affect direct users. It ripples outward through every downstream library, every wrapper, every integration that inherits the vulnerable code path.”

📰 Original Source: The Hacker News

✍️ Author: info@thehackernews.com (The Hacker News)